Concepts Of Uniformly Most Accurate (UMA) – Statistics Notes – For W.B.C.S. Examination.


Just like consistency of a sequence of estimators, Definition 1.1 is a basic notion of “correctness”. In fact, most tests are consistent. In the rest of the section, we refrain from presenting mathematically rigorous results because the level of the subject is such that it is difficult to state even the assumptions without introducing additional technical concepts.

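Consider testing $H_0 : \theta = \theta_0$ versus $H_1 : \theta \neq \theta_0$ by a Wald test. That is, consider the test

$$\phi(x) = \mathbf{1}_{[\chi^2_{p,\alpha},\,\infty)}\left(\|w_n\|_2^2\right),$$

where $w_n$ is the Wald test statistic:

$$w_n := \sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_0\right) \tag{1.1}$$

($\hat\theta_n$ is an asymptotically normal estimator of $\theta$, and $\widehat{V}_n$ is a consistent estimator of its asymptotic variance). The power is

$$\beta(\theta) = P_\theta\left(\|w_n\|_2^2 \ge \chi^2_{p,\alpha}\right).$$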

At any $\theta_1 \neq \theta_0$, the Wald statistic diverges. Indeed,

$$\sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_0\right) = \sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_1\right) + \sqrt{n}\,\widehat{V}_n^{-1/2}\left(\theta_1 - \theta_0\right).$$

We recognize the first term is $O_P(1)$ ($\hat\theta_n$ is asymptotically normal), but the second term diverges. Thus the power tends to one. It is possible to show that the LR and score tests are consistent by similar arguments.
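The consistency argument above can be checked numerically. The following is a minimal sketch, assuming a Bernoulli model with a scalar parameter, the plug-in variance estimate $\hat p_n(1 - \hat p_n)$, and $\alpha = 0.05$; the model, the function names `wald_rejects` and `simulated_power`, and the values $p_0 = 0.4$, $p = 0.5$ are illustrative choices, not part of the notes.

```python
import random
from statistics import NormalDist


def wald_rejects(successes, n, p0, alpha=0.05):
    """Return True if the Wald test of H0: p = p0 rejects at level alpha."""
    p_hat = successes / n
    v_hat = p_hat * (1.0 - p_hat)  # plug-in estimate of the asymptotic variance
    if v_hat == 0.0:
        # Degenerate sample (all zeros or all ones): the statistic is infinite
        # unless p_hat equals p0 exactly.
        return p_hat != p0
    w_squared = n * (p_hat - p0) ** 2 / v_hat
    # With one degree of freedom, the chi-square critical value is the square
    # of the two-sided standard normal critical value.
    critical = NormalDist().inv_cdf(1.0 - alpha / 2.0) ** 2
    return w_squared >= critical


def simulated_power(p_true, p0, n, reps=2000, alpha=0.05, seed=0):
    """Monte Carlo estimate of the rejection probability under p_true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(reps):
        successes = sum(rng.random() < p_true for _ in range(n))
        rejections += wald_rejects(successes, n, p0, alpha)
    return rejections / reps


# Fixed alternative p = 0.5 against H0: p = 0.4, with growing sample size.
for n in (25, 100, 400):
    print(n, simulated_power(p_true=0.5, p0=0.4, n=n))
```

The printed rejection frequencies should increase toward one as $n$ grows, which is what consistency predicts at a fixed alternative.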

Consistency ensures the power of a test grows to one as the sample size grows. However, the rate of convergence is unclear. When we encountered a similar problem in evaluating point estimators, we “blew up” the error by $\sqrt{n}$ and studied the limiting distribution of $\sqrt{n}\left(\hat\theta_n - \theta^*\right)$. The analogous trick here is to study the limiting distribution of the Wald statistic under a sequence of local alternatives:

$$\theta_n := \theta_0 + \frac{h}{\sqrt{n}}. \tag{1.2}$$

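Formally, consider a triangular array

$$\begin{array}{llll}
x_{1,1} \\
x_{2,1} & x_{2,2} \\
\vdots \\
x_{n,1} & x_{n,2} & \dots & x_{n,n} \\
\vdots
\end{array}$$

where $x_{n,i} \overset{\text{i.i.d.}}{\sim} F_{\theta_n}$. We remark that observations in different rows of the array are not identically distributed. Let $\hat\theta_n$ be an asymptotically normal estimator of $\theta_n$ based on observations $\{x_{n,i}\}_{i \in [n]}$. The Wald statistic is

$$\sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_0\right) = \sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_n\right) + \widehat{V}_n^{-1/2} h.$$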

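Intuitively, the first term converges in distribution to a $N(0, I_p)$ random variable, and the second term converges to $\operatorname{Avar}(\hat\theta_n)^{-1/2} h$. Thus

$$\sqrt{n}\,\widehat{V}_n^{-1/2}\left(\hat\theta_n - \theta_0\right) \xrightarrow{d} N\left(\operatorname{Avar}(\hat\theta_n)^{-1/2} h,\; I_p\right), \tag{1.3}$$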

and the power function converges to

$$\beta(\theta_n) \to P\left(\left\|z + \operatorname{Avar}(\hat\theta_n)^{-1/2} h\right\|_2^2 \ge \chi^2_{p,\alpha}\right),$$

where $z \sim N(0, I_p)$. The preceding limit of the power function is called the asymptotic power of the Wald test. Evidently, the larger $\widehat{V}_n^{-1/2} h$ is in norm, the higher the asymptotic power. Thus Wald tests based on efficient estimators are more powerful. There is a similar story for the LR and score tests.
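To see the contrast with a fixed alternative, one can simulate the Wald test along the local alternatives (1.2). The sketch below again assumes a Bernoulli model purely for illustration, so that row $n$ of the triangular array is drawn from $\mathrm{Ber}(p_0 + h/\sqrt{n})$; the name `local_power` and the values $h = 1$, $p_0 = 0.4$, $\alpha = 0.05$ are hypothetical.

```python
import math
import random
from statistics import NormalDist


def local_power(h, p0, n, reps=1000, alpha=0.05, seed=0):
    """Monte Carlo power of the Wald test when row n is Ber(p0 + h / sqrt(n))."""
    rng = random.Random(seed)
    p_n = p0 + h / math.sqrt(n)  # local alternative, as in (1.2)
    # Chi-square critical value with one degree of freedom.
    critical = NormalDist().inv_cdf(1.0 - alpha / 2.0) ** 2
    rejections = 0
    for _ in range(reps):
        p_hat = sum(rng.random() < p_n for _ in range(n)) / n
        v_hat = p_hat * (1.0 - p_hat)  # plug-in variance estimate
        if v_hat == 0.0:
            rejections += 1  # statistic is infinite because 0 < p0 < 1
            continue
        rejections += n * (p_hat - p0) ** 2 / v_hat >= critical
    return rejections / reps


# The alternative shrinks toward p0 at rate 1 / sqrt(n) as n grows.
for n in (100, 400, 1600):
    print(n, local_power(h=1.0, p0=0.4, n=n))
```

Rather than climbing to one, the estimated power should settle near a value strictly between $\alpha$ and one, up to Monte Carlo noise. That non-trivial limit is the asymptotic power.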

We remark that $\|z + \mu\|_2^2$ is distributed as a non-central $\chi^2$ random variable: $\|\mu\|_2^2$ is the non-centrality parameter.
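In the scalar case ($p = 1$) the asymptotic power has a closed form, because $(z + \mu)^2 \ge c^2$ holds exactly when $z \ge c - \mu$ or $z \le -c - \mu$, where $c^2 = \chi^2_{1,\alpha}$. A minimal sketch using only the standard normal CDF follows; the function name `noncentral_chi2_1_power` is made up for this example.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()


def noncentral_chi2_1_power(mu, alpha=0.05):
    """P((z + mu)^2 >= chi2_{1,alpha}) for z ~ N(0, 1)."""
    c = STD_NORMAL.inv_cdf(1.0 - alpha / 2.0)  # c^2 is the chi-square(1) critical value
    upper_tail = 1.0 - STD_NORMAL.cdf(c - mu)  # P(z >= c - mu)
    lower_tail = STD_NORMAL.cdf(-c - mu)       # P(z <= -c - mu)
    return upper_tail + lower_tail


# mu = 0 recovers the level alpha; the power depends on mu only through mu^2.
for ncp in (0.0, 1.0, 4.0, 10.51):
    print(ncp, noncentral_chi2_1_power(math.sqrt(ncp)))
```

The power is symmetric in $\mu$ and increases with the non-centrality parameter $\mu^2$.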

Example 1.2. Let $x_i \overset{\text{i.i.d.}}{\sim} \mathrm{Ber}(p)$. We wish to test $H_0 : p = p_0$ by a Wald test. We know

1. the MLE of $p$ is $\hat p_n = \bar x_n$,

2. the asymptotic variance of $\hat p_n$ is $p(1 - p)$.

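If $p \neq p_0$, the power of the Wald test is approximately

$$\begin{aligned}
\beta(p) &= P_p\left(\left(\frac{\sqrt{n}\,(\hat p_n - p_0)}{\sqrt{p(1-p)}}\right)^2 \ge \chi^2_{1,\alpha}\right) \\
&= P_p\left(\left(\frac{\sqrt{n}\,(\hat p_n - p)}{\sqrt{p(1-p)}} + \frac{\sqrt{n}\,(p - p_0)}{\sqrt{p(1-p)}}\right)^2 \ge \chi^2_{1,\alpha}\right) \\
&\approx P\left(\left(z + \frac{\sqrt{n}\,(p - p_0)}{\sqrt{p(1-p)}}\right)^2 \ge \chi^2_{1,\alpha}\right),
\end{aligned}$$

where $z$ is a standard normal random variable. The non-centrality parameter is $\dfrac{n(p - p_0)^2}{p(1-p)}$.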

To illustrate the use of the preceding approximate power function in a concrete setting, consider the design question: how many samples are required to achieve 0.9 power against the alternative $H_1 : p_1 = p_0 + 0.1$? By the properties of the non-central $\chi^2_1$ distribution, to ensure

$$P\left((z + \mu)^2 \ge \chi^2_{1,\alpha}\right) \ge 0.90,$$

the non-centrality parameter $\mu^2$ must be at least 10.51. Recall the non-centrality parameter is $\dfrac{n(p_1 - p_0)^2}{p_1(1 - p_1)}$. We solve for $n$ to deduce

$$n > \frac{10.51\,p_1(1 - p_1)}{(p_1 - p_0)^2} = 262.65.$$
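The sample-size calculation can be sketched in a few lines. The notes do not state $\alpha$ or $p_0$, so the code assumes $\alpha = 0.05$ (which reproduces the threshold of about 10.51) and a hypothetical $p_0 = 0.4$, hence $p_1 = 0.5$. It also uses the common approximation $\mu \approx z_{1-\alpha/2} + z_{\text{power}}$, which ignores the negligible far tail of the non-central $\chi^2_1$ probability. The function name `required_sample_size` is illustrative.

```python
import math
from statistics import NormalDist


def required_sample_size(p0, p1, power=0.9, alpha=0.05):
    """Return (ncp, n): the non-centrality needed and the implied sample size bound."""
    z = NormalDist()
    # Smallest mu with P((z + mu)^2 >= chi2_{1,alpha}) ~ power, dropping the far tail.
    mu = z.inv_cdf(1.0 - alpha / 2.0) + z.inv_cdf(power)
    ncp = mu ** 2
    # Solve ncp = n (p1 - p0)^2 / (p1 (1 - p1)) for n.
    n = ncp * p1 * (1.0 - p1) / (p1 - p0) ** 2
    return ncp, n


ncp, n = required_sample_size(p0=0.4, p1=0.5)  # p0 = 0.4 is an assumed value
print(round(ncp, 2), math.ceil(n))
```

Under these assumptions the bound lands close to the figure quoted above; small differences come from rounding the non-centrality threshold and from the unstated value of $p_0$.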

It is possible to rigorously justify (1.1) under suitable conditions by appealing to the theory of local asymptotic normality, much of which was developed by Lucien Le Cam at Berkeley.

2. Interval estimation. In the first part of the course, we considered the task of point estimation, where the goal is to provide a single point that is a guess for the value of the unknown parameter. The goal of interval estimation is to provide a set that contains the unknown parameter with some prescribed probability.

Definition 2.1. Let $C(x) \subset \Theta$ be a set-valued random variable. It is a $(1 - \alpha)$-confidence set for a parameter $\theta$ if

$$P_\theta\left(\theta \in C(x)\right) \ge 1 - \alpha.$$

If $C(x)$ is an interval on $\mathbb{R}$, we call $C(x)$ a confidence interval.
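The coverage probability in Definition 2.1 can be estimated by simulation. The sketch below uses a Wald-type interval for a Bernoulli parameter, $\hat p_n \pm z_{1-\alpha/2}\sqrt{\hat p_n(1-\hat p_n)/n}$, as an illustrative choice that is not taken from the notes. This interval meets the coverage requirement only approximately, for large $n$. The names `wald_interval` and `coverage` and the values $p = 0.4$, $n = 200$ are assumptions.

```python
import random
from statistics import NormalDist


def wald_interval(successes, n, alpha=0.05):
    """Approximate (1 - alpha) interval p_hat +/- z * sqrt(p_hat (1 - p_hat) / n)."""
    p_hat = successes / n
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    half_width = z * (p_hat * (1.0 - p_hat) / n) ** 0.5
    return p_hat - half_width, p_hat + half_width


def coverage(p_true, n, reps=2000, alpha=0.05, seed=0):
    """Fraction of simulated data sets whose interval contains the fixed p_true."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(reps):
        successes = sum(rng.random() < p_true for _ in range(n))
        lower, upper = wald_interval(successes, n, alpha)
        hits += lower <= p_true <= upper  # the interval varies, p_true stays fixed
    return hits / reps


print(coverage(p_true=0.4, n=200))
```

The probability being estimated is over repeated draws of the data, and therefore of the interval, with the parameter held fixed throughout.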

We emphasize that the set $C(x)$, not the parameter $\theta$, is the random quantity in Definition 2.1. Observing $[l(x), u(x)] = [l, u]$ should not be interpreted as “$\theta \in [l, u]$ with probability at least $1 - \alpha$”: $\theta$

Our own publications are available at our webstore (click here).

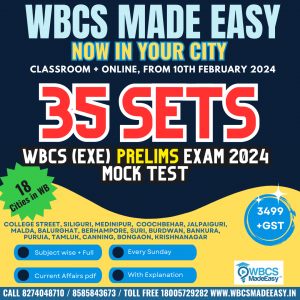

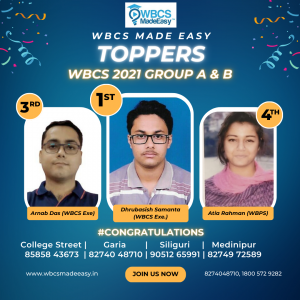

For Guidance of WBCS (Exe.) Etc. Preliminary , Main Exam and Interview, Study Mat, Mock Test, Guided by WBCS Gr A Officers , Online and Classroom, Call 9674493673, or mail us at – mailus@wbcsmadeeasy.in

Visit our you tube channel WBCSMadeEasy™ You tube Channel

Please subscribe here to get all future updates on this post/page/category/website

+919674493673

+919674493673  mailus@wbcsmadeeasy.in

mailus@wbcsmadeeasy.in