W.B.C.S. Examination Notes On – Source Coding Theorem – Computer Science Notes.

The Code produced by a discrete memoryless source, has to be efficiently represented, which is an important problem in communications. For this to happen, there are code words, which represent these source codes.Continue Reading W.B.C.S. Examination Notes On – Source Coding Theorem – Computer Science Notes.

For example, in telegraphy, we use Morse code, in which the alphabets are denoted by Marks and Spaces. If the letter E is considered, which is mostly used, it is denoted by “.” Whereas the letter Q which is rarely used, is denoted by “–.-”

Where Sk is the output of the discrete memoryless source and bk is the output of the source encoder which is represented by 0s and 1s.

The encoded sequence is such that it is conveniently decoded at the receiver.

Let us assume that the source has an alphabet with k different symbols and that the kth symbol Sk occurs with the probability Pk, where k = 0, 1…k-1.

Let the binary code word assigned to symbol Sk, by the encoder having length lk, measured in bits.

Hence, we define the average code word length L of the source encoder as

L represents the average number of bits per source symbol

If Lmin=minimumpossiblevalueofL¯¯¯¯Lmin=minimumpossiblevalueofL¯

Then coding efficiency can be defined as

With L¯¯¯¯≥LminL¯≥Lmin we will have η≤1η≤1

However, the source encoder is considered efficient when η=1η=1

For this, the value LminLmin has to be determined.

Let us refer to the definition, “Given a discrete memoryless source of entropy H(δ)H(δ), the average code-word length L for any source encoding is bounded as L¯¯¯¯≥H(δ)L¯≥H(δ).”

code QUEUEinexampleQUEUEinexample. Which means, the symbols in the code word are greater than or equal to the alphabets in the source code.

Hence with Lmin=H(δ)Lmin=H(δ), the efficiency of the source encoder in terms of Entropy H(δ)H(δ) may be written as

This source coding theorem is called as noiseless coding theorem as it establishes an error-free encoding. It is also called as Shannon’s first theorem.

source coding theorem In communication theory, the statement that the output of any information source having entropy H units per symbol can be encoded into an alphabet having N symbols in such a way that the source symbols are represented by codewords having a weighted average length not less than H/logN

(where the base of the logarithm is consistent with the entropy units). Also, that this limit can be approached arbitrarily closely, for any source, by suitable choice of a variable-length code and the use of a sufficiently long extension of the source (see source coding).

The theorem was first expounded and proved by Claude Elwood Shannon in 1948.

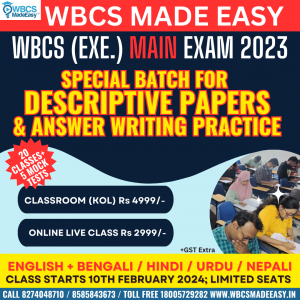

+919674493673

+919674493673  mailus@wbcsmadeeasy.in

mailus@wbcsmadeeasy.in